Psychometric Validation of an AI-Based Evaluation System for Identifying Discrepancies in Learning Processes

Abstract

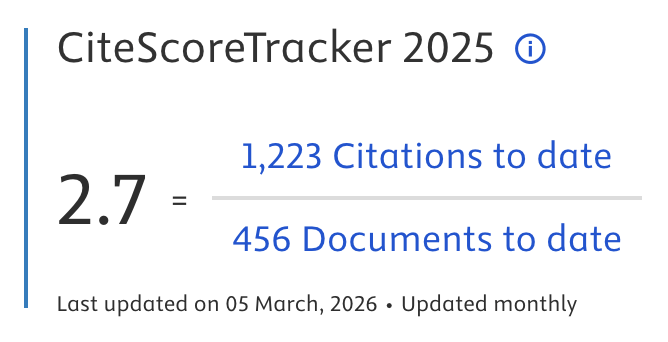

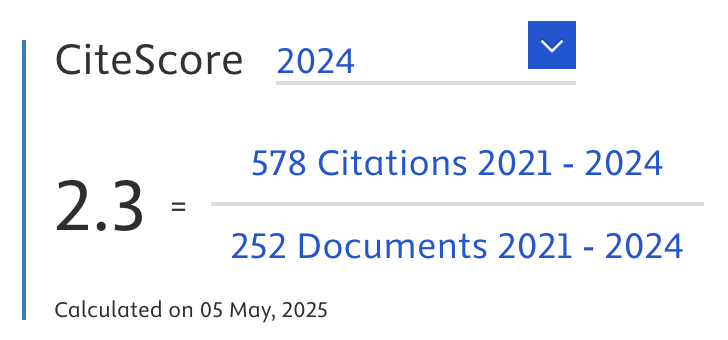

This research advances the field of educational evaluation by designing and psychometrically validating an artificial intelligence (AI)-based diagnostic tool to detect discrepancies in university learning processes. The main novelty is the integration of the Provus Discrepancy Model with a forward-chaining inference engine. The research aims to transform evaluation from an administrative activity into an ongoing process of improvement. The tool was developed and validated through a sequential mixed-methods approach with 400 participants from 3 state universities and 8 evaluation experts. The results provide evidence that the validated system has strong psychometric properties: strong content validity (SD-CVI/Ave = 0.94); high internal consistency reliability (Cronbach's α = 0.94); and solid construct validity as demonstrated through Confirmatory Factor Analysis (CFA) (CFI = 0.94; RMSEA = 0.054). In addition, the AI learning analytics engine identified learning discrepancies with 92.4% diagnostic accuracy (47.4% more accurate than manual evaluation methods). The system's usefulness is demonstrated through high usability (SUS = 88.2); high practical utility (85% total score on the Pragmatic Utility Assessment); real-world applicability (45 discrepancy patterns detected); cost efficiency (73% cost and 67% analysis time compared to traditional methods); and a range of predictive and learning discrepancy analytics. The main contribution of this study is the development of the world's first integrated AI evaluation system that meets high methodological and psychometric standards and provides a set of real-time diagnostic analytics.
Ultimately, this study developed the first integrated evaluation system that combines a historically established evaluation model and its mechanisms with advanced AI capabilities, giving educators and institutions evaluation tools for delivering data-driven pedagogical strategies and interventions in higher education.
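To make the combination of the Provus Discrepancy Model and forward chaining more concrete, the following is a minimal sketch, not the authors' implementation. It assumes that a Provus-style discrepancy is a shortfall of observed performance against a defined standard, and that a forward-chaining engine repeatedly fires rules whose premises are all known facts until nothing new can be derived. The indicator names, standards, performance values, and rules (`R1` to `R3`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """A production rule: if all premises are known facts, assert the conclusion."""
    name: str
    premises: frozenset
    conclusion: str


def discrepancy_facts(standards, performance, tolerance=0.0):
    """Provus-style comparison (assumed form): emit a 'gap' fact for every
    indicator whose observed performance falls short of its standard."""
    facts = set()
    for indicator, standard in standards.items():
        if standard - performance.get(indicator, 0.0) > tolerance:
            facts.add(f"gap:{indicator}")
    return facts


def forward_chain(facts, rules):
    """Fire rules until no rule adds a new fact (data-driven inference)."""
    derived = set(facts)
    fired = []
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.premises <= derived and rule.conclusion not in derived:
                derived.add(rule.conclusion)
                fired.append(rule.name)
                changed = True
    return derived, fired


# Hypothetical standards and observed values for one course.
STANDARDS = {"attendance": 0.80, "assignment_completion": 0.90, "quiz_average": 0.70}
PERFORMANCE = {"attendance": 0.85, "assignment_completion": 0.60, "quiz_average": 0.55}

# Hypothetical diagnostic rules.
RULES = [
    Rule("R1", frozenset({"gap:assignment_completion", "gap:quiz_average"}),
         "diagnosis:process_discrepancy"),
    Rule("R2", frozenset({"diagnosis:process_discrepancy"}),
         "action:review_learning_activities"),
    Rule("R3", frozenset({"gap:attendance"}),
         "diagnosis:engagement_discrepancy"),
]

facts = discrepancy_facts(STANDARDS, PERFORMANCE)
derived, fired = forward_chain(facts, RULES)
print(sorted(derived))
print(fired)
```

In this toy data, attendance meets its standard, so only the assignment and quiz gaps become facts; `R1` then derives a diagnosis, which in turn lets `R2` recommend an action, while `R3` never fires. Chaining from observed gaps toward diagnoses is what distinguishes forward chaining from a goal-driven (backward-chaining) design.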


Journal of Applied Data Sciences

| ISSN | : | 2723-6471 (Online) |
| Collaborated with | : | Computer Science and Systems Information Technology, King Abdulaziz University, Kingdom of Saudi Arabia. |
| Publisher | : | Bright Publisher |
| Website | : | http://bright-journal.org/JADS |
| Email | : | taqwa@amikompurwokerto.ac.id (principal contact) |
| | | support@bright-journal.org (technical issues) |

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0
